Artificial intelligence is all the rage lately. Behind many of the mind-blowing breakthroughs of the past decade is a single workhorse: more compute. As engineers work hard to supply the necessary electronics, researchers are turning to less conventional ideas in hopes of finding the next big thing. We showed how the complex computations that nature performs free of charge can be harnessed for neural networks.

published on Jan 27, 2023

Models of neural networks are used ubiquitously in science and technology these days. Such artificial neural networks are computer algorithms inspired by the way the human brain works. While they enable revolutionary technology, running them on digital computers consumes ever-larger amounts of energy and time, which hampers efforts to understand and improve these algorithms. Yet before digital computers were cheap and widely available, engineers and scientists mostly used a different kind of computer, one that researchers are now revisiting in light of ever-more energy-hungry artificial neural networks.

To simulate complex phenomena like the erosion of riverbeds or the flight of an airplane, engineers built small models of these systems in the lab, such as hydraulic flumes or wind tunnels. The advantage is that once such a system is set up, it is incredibly efficient at simulating what it was built for: in a hydraulic flume, for example, water flows down the artificial riverbed and erosion simply happens. Such “computers” are sometimes called analog computers, not just because they are not digital, but because they rely on a mathematical analogy between the simulated and the simulating system. In contrast, simulations on digital supercomputers consume plenty of energy and can take anywhere from a few minutes to several months. Yet the flexibility of digital computers almost always tips the scale in their favor. To get reliable answers from an analog computer, much effort goes into engineering the analogy to high accuracy; on a digital computer, programmers can adjust the model on the fly simply by changing the code.

Given how widely neural networks are used, however, a fast and energy-efficient analog model of one could find broad application. In our work, we set out to build the equivalent of a wind tunnel for an artificial neural network and to demonstrate a general way for scientists to construct such models.

A surprising finding on which the field of artificial intelligence thrives is that even complex tasks can be broken down into many simple operations that are trained automatically. For the brain to tell whether it is looking at a cat or a dog, it pays attention to characteristic features while ignoring irrelevant information, like a sofa in the background. Artificial neural networks mimic this process by propagating information through successive layers of abstraction. Each layer performs only very simple operations, e.g., recognizing an oval as a head or line-like objects as limbs. However, a sequence of many such simple operations can solve complex tasks.
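To make the idea of chaining simple operations concrete, here is a generic illustration (not taken from the study): two very simple operations, a weighted sum and "clip negative values to zero" (often called ReLU), are stacked into a two-layer network that computes XOR, a function that no single weighted sum followed by a threshold can compute. The weights are hand-picked rather than trained, and all function names are hypothetical.

```python
from typing import Callable, List

Vector = List[float]

def relu(v: Vector) -> Vector:
    """A very simple nonlinear operation: clip negative values to zero."""
    return [max(0.0, x) for x in v]

def linear(weights: List[Vector], bias: Vector) -> Callable[[Vector], Vector]:
    """Return a layer that computes weighted sums of its inputs plus a bias."""
    def apply(x: Vector) -> Vector:
        return [sum(w * xi for w, xi in zip(row, x)) + b
                for row, b in zip(weights, bias)]
    return apply

# Hand-picked (not trained) weights for a two-layer network computing XOR.
layer1 = linear([[1.0, 1.0], [1.0, 1.0]], [0.0, -1.0])
layer2 = linear([[1.0, -2.0]], [0.0])

def network(x: Vector) -> float:
    # Layer 1: one unit detects "at least one input is on", the other "both are on".
    hidden = relu(layer1(x))
    # Layer 2: combine the two detected features into the final answer.
    return layer2(hidden)[0]

for a in (0.0, 1.0):
    for b in (0.0, 1.0):
        print(int(a), int(b), "->", network([a, b]))
```

Neither layer alone can solve the task; only their sequence can, which is the point the paragraph makes about layers of simple operations.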

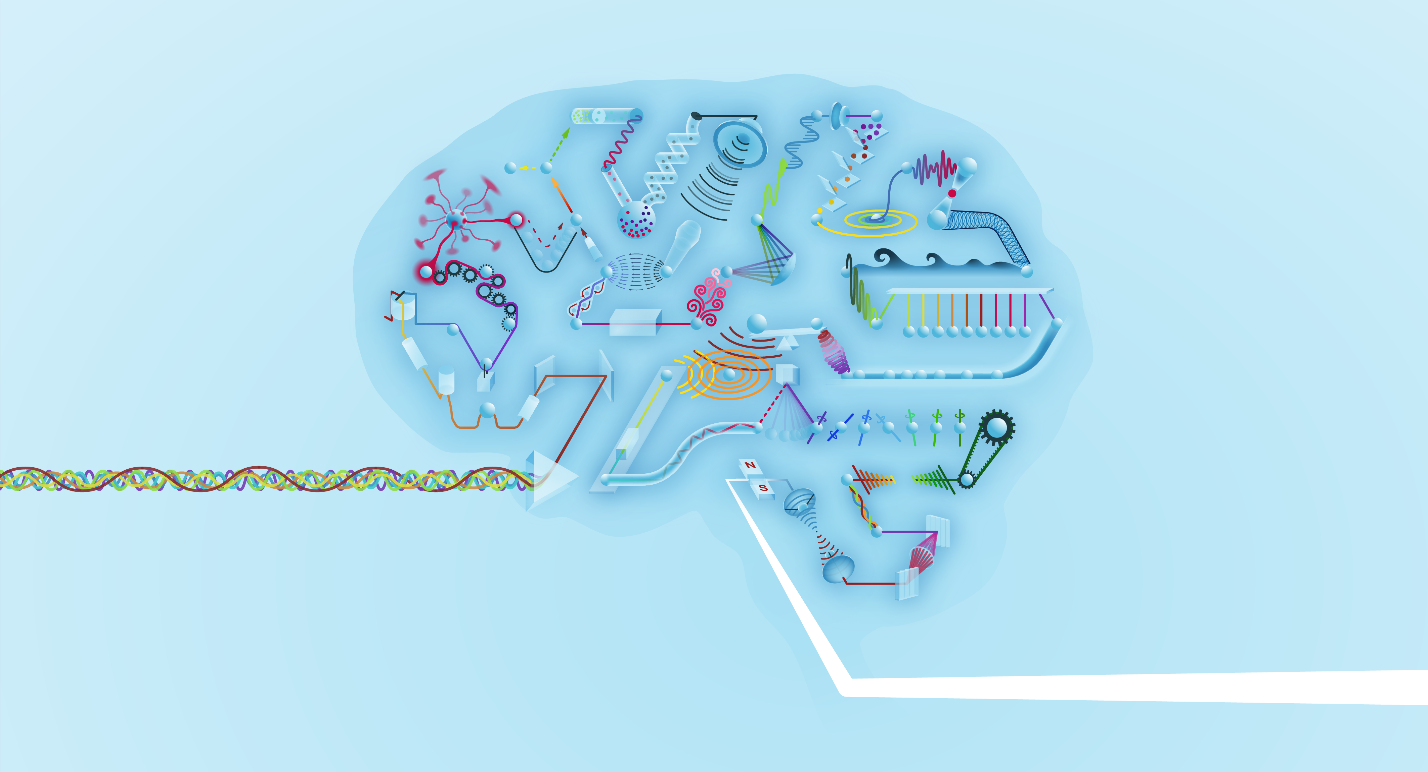

We found that almost any physical system can perform these simple operations, provided there is a way to send data into the system and read it out again. For example, we tried this on a loudspeaker: data can be injected in the form of electrical signals, just like when playing music. The loudspeaker's membrane responds with complex oscillations, essentially amplifying some sound frequencies and attenuating others. Data can be read out of the system by recording the sound with a microphone. Such amplification and attenuation of features is an operation that can be used for neural network computations. We demonstrated that a loudspeaker, an electronic circuit built on a hobbyist breadboard, and an optical laser system can each help perform neural computations in this way. We don't need to precisely engineer an analogy; many physical systems are a good analog model for a neural network! Of course, many other physical systems are not, so an important research question is which systems are the best choice.
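The "data in, data out" idea for the loudspeaker can be sketched with a textbook stand-in for the membrane: a driven, damped harmonic oscillator. Each input feature sets the drive amplitude at one frequency, and the "microphone" reads back the response amplitude at that frequency. This is a minimal sketch under loud assumptions: the resonance frequency `omega0`, damping `gamma`, drive frequencies, and function names are all hypothetical, and the model is idealized and linear, ignoring any nonlinearity or mixing between frequencies in a real loudspeaker.

```python
import math
from typing import List

def membrane_gain(omega: float, omega0: float = 2.0, gamma: float = 0.5) -> float:
    """Steady-state amplitude gain of a driven, damped oscillator with unit mass:
    1 / sqrt((omega0**2 - omega**2)**2 + (gamma * omega)**2).
    omega0 (resonance frequency) and gamma (damping) are hypothetical values."""
    return 1.0 / math.sqrt((omega0 ** 2 - omega ** 2) ** 2 + (gamma * omega) ** 2)

def speaker_layer(features: List[float], freqs: List[float]) -> List[float]:
    """Data in: feature k sets the drive amplitude at frequency freqs[k].
    Data out: a microphone measures the response amplitude at each frequency."""
    return [x * membrane_gain(w) for x, w in zip(features, freqs)]

freqs = [0.5, 1.0, 2.0, 4.0]  # one drive frequency per input feature (hypothetical)
features = [1.0, 1.0, 1.0, 1.0]
print([round(y, 3) for y in speaker_layer(features, freqs)])
```

With equal inputs, the feature driven at the resonance `omega0` comes out strongest (its gain is exactly 1 for these values), while the feature driven well above resonance is strongly attenuated: the membrane weights its inputs without any digital arithmetic.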

The technical breakthrough that allowed us to turn these systems into physical neural networks was an algorithm that automatically trains controllable parameters of a physical system, such as its shape, the forces applied to it, or a control signal. The algorithm can train many physical parameters simultaneously in a network of connected physical systems. This allows different physical systems to cooperate and break down a complicated task into many successive simple operations, just as modern artificial neural networks do.
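The cited paper (Wright et al., 2022) calls its algorithm physics-aware training: the forward pass of backpropagation runs on the physical systems themselves, while the backward pass uses an approximate, differentiable digital model of them. The toy below is a minimal sketch of that idea, not the paper's implementation. Two "physical" systems are connected in series, and their responses are secretly 10% weaker than the digital model `tanh(theta * x)` assumes. The mismatch, learning rate, step count, targets, and all function names are hypothetical.

```python
import math
from typing import List, Tuple

def physical_layer(x: float, theta: float) -> float:
    """Stand-in for one physical system (hypothetical). Its true response is
    10% weaker than the digital model assumes; the trainer never sees this."""
    return 0.9 * math.tanh(theta * x)

def model_grads(x: float, theta: float) -> Tuple[float, float]:
    """Derivatives (d/dx, d/dtheta) of the digital model tanh(theta * x)."""
    s = 1.0 - math.tanh(theta * x) ** 2  # sech^2(theta * x)
    return theta * s, x * s

def physical_loss(params: Tuple[float, float], xs: List[float], targets: List[float]) -> float:
    """Summed squared error measured on the physical network (two systems in series)."""
    th1, th2 = params
    return sum(0.5 * (physical_layer(physical_layer(x, th1), th2) - t) ** 2
               for x, t in zip(xs, targets))

def train(xs: List[float], targets: List[float], params: Tuple[float, float],
          lr: float = 0.5, steps: int = 2000) -> Tuple[float, float]:
    th1, th2 = params
    for _ in range(steps):
        g1 = g2 = 0.0
        for x, t in zip(xs, targets):
            # Forward pass on the physical systems; h is the measured intermediate output.
            h = physical_layer(x, th1)
            y = physical_layer(h, th2)
            err = y - t  # dL/dy for L = 0.5 * (y - t)**2
            # Backward pass: chain rule through the digital model, evaluated at the measured h.
            dy_dh, dy_dth2 = model_grads(h, th2)
            _, dh_dth1 = model_grads(x, th1)
            g2 += err * dy_dth2
            g1 += err * dy_dh * dh_dth1
        # Both physical parameters are updated simultaneously.
        th1 -= lr * g1
        th2 -= lr * g2
    return th1, th2

# Hypothetical task: reproduce what the physical network does at parameters (1.0, 1.0).
xs = [-1.0, -0.5, 0.5, 1.0]
targets = [physical_layer(physical_layer(x, 1.0), 1.0) for x in xs]

start = (0.3, 0.3)
trained = train(xs, targets, start)
print("loss before:", physical_loss(start, xs, targets))
print("loss after: ", physical_loss(trained, xs, targets))
print("trained parameters:", trained)
```

Because the errors come from the devices' real outputs, training in this toy settles where the physical network fits the targets, even though the model's gradients are off by a constant factor; training the digital model alone would fit the model rather than the devices.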

Such physical neural networks offer some intriguing possibilities for scientists in the near term. So far, scientists have been restricted to a very narrow class of physical systems with which to build computers for neural networks. Our work hugely broadens that class to systems that were never thought of as computers, including some that could be much faster and more energy-efficient than current computers. Physical neural networks can also directly interact with the physical world to make, for instance, cameras that work more like our eyes, microphones that work more like our ears, etc.

Computing paradigms other than electronic digital computing are poised for a renaissance with the rise of neuromorphic computing. Our brains are living proof that highly efficient analog computers, capable of feats beyond modern digital computers, are possible. We hope our work inspires scientists working in this direction.

Original Article:

Wright, L. G., Onodera, T., Stein, M. M., Wang, T., Schachter, D. T., Hu, Z., & McMahon, P. L. (2022). Deep physical neural networks trained with backpropagation. Nature, 601(7894), 549–555. https://doi.org/10.1038/s41586-021-04223-6

Maths, Physics & Chemistry